A specially trained AI is using data collected from fMRI brain scans to read people’s minds. And while the system must first be trained on the person whose thoughts it wants to read, the results are shockingly accurate, if somewhat haunting.

Of course, an AI system that can read minds could offer some tangible potential benefits for people suffering from certain disabilities. But it also sparks serious concerns about the very nature of privacy, particularly the privacy of one’s thoughts.

The Ability to Read People’s Minds Is Common in Dystopian Science Fiction

Throughout the history of literature, television, movies, and other media, the ability to read people’s minds is often associated with either magical abilities or technology gone wrong. And in most cases, the end result is not a pretty one.

For example, author Philip K. Dick envisioned law enforcement gone awry, arresting and convicting people for simply thinking about committing a future crime, in his 1956 novella “The Minority Report.” Author George Orwell famously invented the “thought police” for his horrifying view of the world’s techno-future, the novel 1984, published in 1949. Even today, the idea of the dreaded “thought police” is often invoked negatively in the public discourse on politics, information, technology, and the media.

Now, a team of Japanese researchers says they set out to determine if a specialized AI tool could be connected to a brain scan machine to accomplish what has previously existed only in this type of fiction: the ability to read people’s minds. And according to a pre-print of their soon-to-be-published study, their results have to be seen to be believed.

Diffusion Method AI Pulls Images Right out of People’s Thoughts

To train their mind-reading AI, the research team from Osaka University showed four volunteer subjects a series of 10,000 images while their brains were scanned by an fMRI machine. This device allowed the researchers to map minute changes in blood flow within the brain to see which particular areas “light up” in response to which images.

The process was then repeated two more times, with the AI gathering more and more information on brain activity for all 10,000 images across all four subjects. The AI then mapped the data onto a format used by other generative models, providing a working dataset against which it could compare a subject’s thoughts.

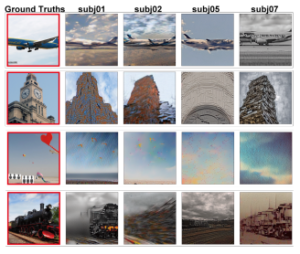

Finally, once the system was adequately trained, the subjects were shown an image of an object or scene (one that was not part of the original data set of 10,000 images) while they were in the fMRI scanner. Shockingly, by comparing the blood flow in the brain against its training data, the AI system was able to read the brain scan and recreate remarkably accurate versions of the image the person was looking at.
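For readers curious what "mapping brain activity onto a format used by generative models" might look like in practice, here is a minimal sketch. It is not the researchers' code. It assumes, purely for illustration, that the mapping is a simple ridge regression from fMRI voxel responses to the latent vectors an image generator works with, and it uses random synthetic numbers in place of real brain scans. The function names (`fit_ridge`, `predict_latents`, `mean_correlation`) and all array sizes are hypothetical.

```python
import numpy as np


def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    gram = X.T @ X + alpha * np.eye(n_features)
    return np.linalg.solve(gram, X.T @ Y)


def predict_latents(X, W):
    """Map voxel responses to predicted latent vectors."""
    return X @ W


def mean_correlation(A, B):
    """Average Pearson correlation between matching columns of A and B."""
    A_c = A - A.mean(axis=0)
    B_c = B - B.mean(axis=0)
    num = (A_c * B_c).sum(axis=0)
    den = np.sqrt((A_c ** 2).sum(axis=0) * (B_c ** 2).sum(axis=0))
    return float((num / den).mean())


# Synthetic stand-ins (assumption): rows are viewed images, columns are
# fMRI voxels (X) or dimensions of an image generator's latent space (Y).
rng = np.random.default_rng(0)
n_train, n_test, n_voxels, n_latent = 200, 20, 50, 8

true_W = rng.normal(size=(n_voxels, n_latent))
X_train = rng.normal(size=(n_train, n_voxels))
Y_train = X_train @ true_W + 0.1 * rng.normal(size=(n_train, n_latent))

# "Held-out" scans for images that were not used during training.
X_test = rng.normal(size=(n_test, n_voxels))
Y_test = X_test @ true_W

W = fit_ridge(X_train, Y_train, alpha=1.0)
Y_pred = predict_latents(X_test, W)
score = mean_correlation(Y_pred, Y_test)
```

In a real pipeline, the predicted latent vectors would then be handed to the image generator to produce a picture. That step is left out here because it depends on the specific diffusion model being used.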

One of the researchers on the team, Yu Takagi, an assistant professor at Osaka University and a co-author of the paper, said they were somewhat surprised at how accurate their AI was at reading people’s minds, since that wasn’t what the makers of the underlying software, the diffusion model, had in mind.

“The most interesting part of our research is that the diffusion model—so-called image-generating AI which […] was not created to understand the brain—predicts brain activity well and can be used to reconstruct visual experiences from the brain,” Takagi told Newsweek.

Excitement and Extreme Caution Accompany AI Ability to Read People’s Minds

When discussing potential applications for their approach to mind reading, Takagi noted that while their system was pre-trained on particular images, a more refined version could potentially pull images right out of people’s imaginations.

“When we see things, visual information captured by the retina is processed in a brain region called the visual cortex located in the occipital lobe,” Takagi explained. “When we imagine an image, similar brain regions are activated. It is [therefore] possible to apply our technique to brain activity during imagination.” Still, the researcher cautioned, it is currently unclear how accurately they would be able to decode such activity.

Ultimately, if the creation of an intelligent machine that can read people’s minds sounds like something right out of science fiction, that’s because it is. And not the “everything will be great in the future” type of utopian sci-fi like Star Trek, but more like the nightmare scenarios painted by early and mid-20th-century science fiction authors who saw an increasingly oppressive government using technology to further subjugate its populace for “thought crimes.”

Time will tell which one this turns out to be. But in either case, it appears that the last area of true privacy, one’s own thoughts, may soon fall away under the increasing power of AI. And that, in and of itself, is one scary thought.

Christopher Plain is a Science Fiction and Fantasy novelist and Head Science Writer at The Debrief. Follow and connect with him on Twitter, learn about his books at plainfiction.com, or email him directly at christopher@thedebrief.org.