Welcome to this week’s installment of The Intelligence Brief… with Google’s announcement that it will soon begin opening access to Bard, its newest and most advanced chatbot, we’ll be looking at 1) the company’s announcement about Bard’s early open access phase, 2) the origins of the new Google super-chatbot, 3) why some chatbots offer less than reliable information, and whether Bard will be different, and 4) how the chatbot’s predecessor generated controversy last year, and what that means for Bard going forward.

Sign up to get The Intelligence Brief newsletter sent to your inbox each week.

Quote of the Week

“A year spent in artificial intelligence is enough to make one believe in God.”

– Bernard Marr

Latest Stories: A few of the stories we’ve covered in recent days here at The Debrief include how DARPA announced it is moving forward with plans to develop an entirely new class of flying boat for use in amphibious military operations. Elsewhere, the U.S.A.F. is set to be equipped with a new combat-proven electronically scanned array sensor system, which will enhance both air and maritime sensing capabilities. You can get caught up on all our recent stories at the end of this newsletter.

Podcasts: In podcasts from The Debrief, this week MJ Banias and Stephanie Gerk explore whether we can power electric airplanes with death rays, and MJ tries to run the space junk gauntlet surrounding planet Earth in The Debrief Weekly Report. Meanwhile over on The Micah Hanks Program, I examine several law enforcement encounters with UAP, including a sighting by a police officer in 1976 that became a foundational investigation for J. Allen Hynek’s Center for UFO Studies. You can subscribe to all of The Debrief’s podcasts, including audio editions of Rebelliously Curious, by heading over to our Podcasts Page.

Video News: Be sure to catch Chrissy Newton’s latest installment of Rebelliously Curious, where she sits down with Robert Millman, CEO and Co-Founder of Electric Sky, to discuss the “Whisper Beam” and the future of faster, cleaner, quieter, and more economical travel. You can also watch past episodes and other great content from The Debrief on our official YouTube Channel.

And with that all out of the way, it’s time we shift our attention to the new AI chatbot Google plans to unveil, the successor to its previous (and controversial) bot, and a rival to OpenAI’s ChatGPT.

Google Introduces Bard

This week, Google announced that it would begin to open access to Bard, its new experimental chat AI service.

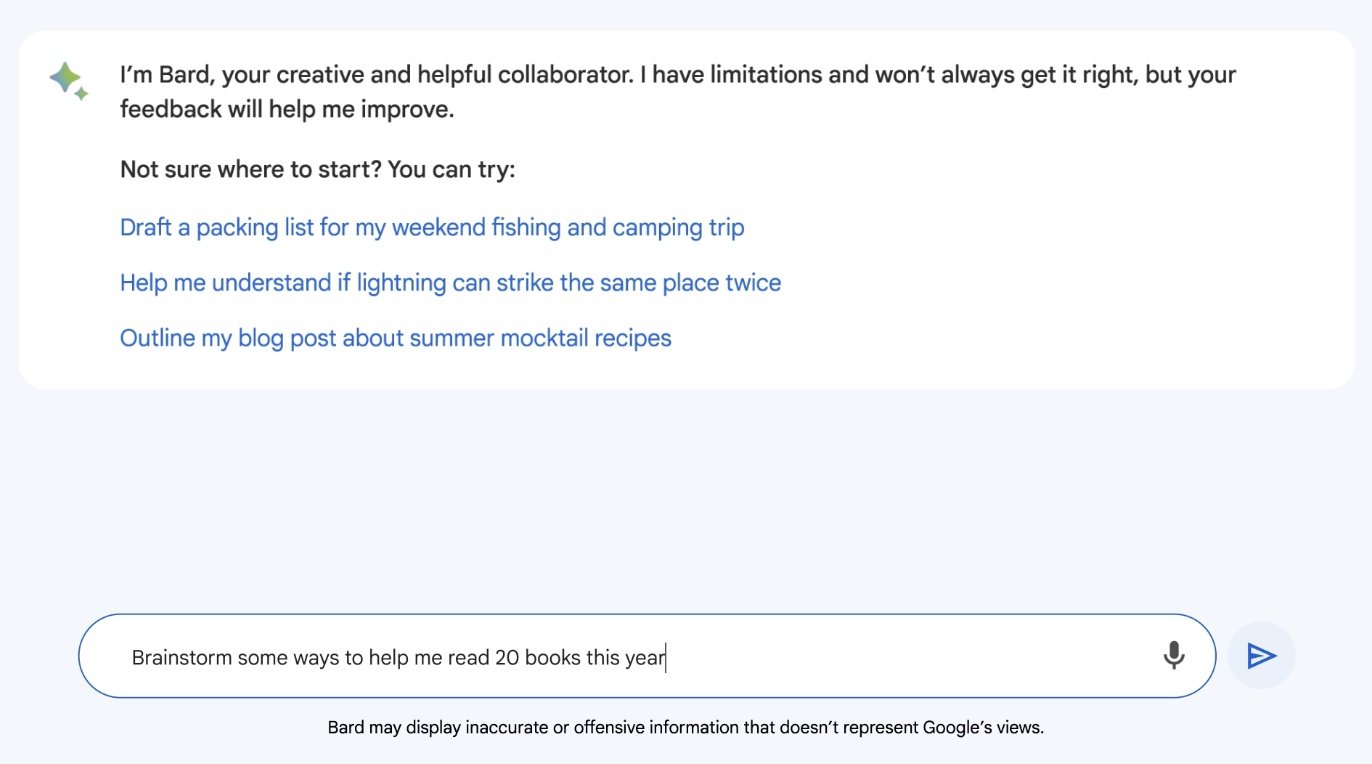

“Today we’re starting to open access to Bard, an early experiment that lets you collaborate with generative AI,” wrote Sissy Hsiao and Eli Collins in a post on Google’s blog, The Keyword.

“This follows our announcements from last week as we continue to bring helpful AI experiences to people, businesses and communities,” they said.

According to the company, Bard is designed primarily as an interface to a large language model (LLM), which Google likens to a kind of prediction engine powering the chatbot.

Although it is similar to ChatGPT, Google’s new Bard AI will function a bit differently, in that the information it draws from will come directly from the World Wide Web, relying in part on Google’s own search engine and algorithmic capabilities.

So what do we know about Bard, how it will work, and how soon it may see full deployment?

Origins of an AI Chatbot

Announced in early February in a post by Sundar Pichai, CEO of Google and Alphabet, the chatbot has deeper origins that extend back another couple of years, to the release of Google’s Language Model for Dialogue Applications (LaMDA). According to Google, Bard will allow its users to collaborate in new ways with generative AI.

“We’ve been working on an experimental conversational AI service, powered by LaMDA, that we’re calling Bard. And today, we’re taking another step forward by opening it up to trusted testers ahead of making it more widely available to the public in the coming weeks,” Pichai wrote on February 6.

By combining information readily accessible on the Internet with Google’s own large language models, Pichai said, Bard would be both creative and capable of providing high-quality responses.

“Bard can be an outlet for creativity, and a launchpad for curiosity, helping you to explain new discoveries from NASA’s James Webb Space Telescope to a 9-year-old, or learn more about the best strikers in football right now, and then get drills to build your skills,” Pichai wrote last month.

A Learning Computer, or a Lying One?

An LLM essentially functions by learning from the data it is provided (in the case of LaMDA and its successor Bard, information from the Web) and improving its ability to guess the next word in a sequence. LLMs are also designed to display a degree of creativity, which helps them choose reasonable, varied responses rather than always making the same predictive word choices.

However, facilitating creativity in this way doesn’t always translate to 100% accuracy. Because an LLM balances creativity in the text it generates against accuracy, it can sometimes produce questionable information. As a result, the limitations of existing chatbots are often revealed once the accuracy of their answers is checked. Although such AI programs are designed to sound authoritative, their answers clearly aren’t always reliable.

According to a Google fact sheet providing an overview of Bard, “while a user can expect exactly the same and consistent response to a database query (one that is a literal retrieval of the information stored in it), the response from an LLM to the same prompt will not necessarily be the same every time (nor will it necessarily be a literal retrieval of the information it was trained on); all this is a result of the LLM’s underlying mechanism of predicting the next word.”

These factors also reveal why intelligent, accurate-sounding responses produced by chatbots can include bad information. The question, then, comes down to the purpose of the AI in question: is its role to be an information retrieval system that provides reliable responses (much as Google’s search engine algorithms already attempt to do), or a creative one, capable of producing varied, “real”-sounding responses similar to a human’s?

Google clearly aims to strike a balance between these with Bard, and to do better than other chatbots have managed in the past. However, the realization of an accurate chatbot that can realistically mimic human behaviors begins to take us into uncertain territory… and it wouldn’t be the first time Google has found itself the subject of controversy over the AI it is developing.

A Sentient Chatbot?

Last June, Google engineer Blake Lemoine stirred up a minor controversy when he urged his employers at Google to consider whether LaMDA, Bard’s predecessor, might be displaying signs of sentience.

Google downplayed Lemoine’s concerns, saying it was unlikely that its chatbot was displaying sentience. Lemoine was placed on administrative leave following the incident. But were his concerns really warranted after all?

Maybe not. Several AI scholars responded to the claims, warning that Lemoine had essentially mistaken AI’s best ruse, its ability to convincingly mimic human behavior, for actual sentience on the part of Google’s chatbot.

While major concerns about the level of actual intelligence being displayed by machines at the current time may be unwarranted, that isn’t to say we should entirely toss caution to the wind. If Bard ends up being capable of what its creators at Google say it can do, it should represent the most intelligent and factually accurate chatbot in existence.

That all remains to be seen, of course. But like many other Google users out there, I’ve already signed up to be notified when the bot becomes available for early testing, and as is usually the case, readers of The Intelligence Brief will be the first to hear about how that goes. In the meantime, I’d also be interested in hearing about your experiences: if you have a tip or other information you’d like to send along directly to me, you can email me at micah [@] thedebrief [dot] org, or Tweet at me @MicahHanks.

But for now, that concludes this week’s installment of The Intelligence Brief. You can read past editions of The Intelligence Brief at our website, or if you found this installment online, don’t forget to subscribe and get future email editions from us here.

Here are the top stories we’re covering right now…

- Is ʻOumuamua a Hydrogen-Water Iceberg?

Was ʻOumuamua a hydrogen-water iceberg? Harvard astronomer Avi Loeb weighs in on a recent paper on the mysterious interstellar space object.

- Department of Energy Scientists Achieve the Impossible with Major Breakthrough in Ultrafast Beam-Steering

Scientists in New Mexico say they have mastered ultrafast steering of light pulses from conventional, “incoherent” sources in a major scientific breakthrough.

- First Detection of Neutrinos in a Particle Collider Reported by California Physicists in a Scientific First

Physicists in California have reported the detection of neutrinos in a particle collider, marking a new breakthrough development in physics.

- DARPA Wants to Revolutionize Amphibious Warfare By Developing An Entirely New Class of Flying Boat

DARPA announced it is moving forward with plans to develop an entirely new class of flying boat for use in amphibious military operations.

- Powerful New Multi-role Electronically Scanned Array (MESA) Sensor Set to be Delivered to U.S. Air Force

The U.S. Air Force is set to be equipped with a formidable new combat-proven electronically scanned array sensor system, which will enhance both air and maritime sensing capabilities.

- Comprehensive Climate Change Report Warns That World Leaders Must Take Action to Limit Severe Weather Events

The United Nations is calling on governments around the world to take urgent action in the face of climate change, according to a new report that says current efforts to combat the crisis are insufficient.

- One Small Step for Man’s Garbage: Space Junk, EV Aircraft, and Monitoring Earth’s Pollution with Satellites

This week on ‘The Debrief Weekly Report’, we look at NASA and the Italian space agency’s mission to monitor pollution, and how scientists around the world want to update the space treaty to include space junk.

- Breakthrough Study of Anomalous Photoemission Challenges Our Current Understanding of the Phenomenon

Physicists report a discovery they say could upend our long-held scientific views involving the phenomenon that causes the photoelectric effect.

- Lockheed Martin Won’t Deny The Existence of This Mysterious Hypersonic Spy Plane

Lockheed Martin continues to hint that the “Darkstar” hypersonic jet in the film Top Gun: Maverick might actually exist.

- Pentagon Space Mission Aims to Test Laser Power Beaming in Space

This week, we look at the NRL’s efforts to use laser power beaming with its Space Wireless Energy Laser Link (SWELL).

- The Hunt for an Invisible Killer: Colorado’s Mystery Cattle Deaths

This week we present the results of an investigation into mysterious cattle deaths in Colorado based on official documents and interviews.

- Pentagon Releases Video of Russian Fighter Jet Forcing Down U.S. Surveillance Drone

The Pentagon has released dramatic video from Tuesday’s encounter between an American MQ-9 Reaper surveillance drone and a Russian fighter jet over the Black Sea.