Researchers have used infrared (IR) imaging and machine learning to reconstruct the actual colors of a scene from monochromatic “night vision” images. Designed to learn and analyze the spectral signatures of individual IR wavelengths, the new system could one day offer full-color night vision tools for military applications, security professionals, and wildlife observers.

CURRENT NIGHT VISION TECH IS MONOCHROMATIC

Humans see in the visible light spectrum, which allows for the fantastic array of colors and textures perceived by the human eye. Night vision technologies extend this ability into the dark by capturing non-visible infrared light and converting that data into a visible signal the human eye can interpret. Unfortunately, the information gathered by infrared systems limits these images to a monochromatic representation, robbing the target of its color.

Some advancements in IR technology have improved sensor sensitivity. However, images are still represented in green, black, and white, with the full palette of colors unavailable to the night vision user. Now, researchers from the University of California, Irvine, have cracked the code, potentially opening the door to full-color night vision technologies.

MACHINE LEARNING BRINGS THE COLOR BACK

“Humans perceive light in the visible spectrum (400-700 nm),” explains the published study. “Some night vision systems use infrared light that is not perceptible to humans, and the images rendered are transposed to a digital display presenting a monochromatic image in the visible spectrum.”

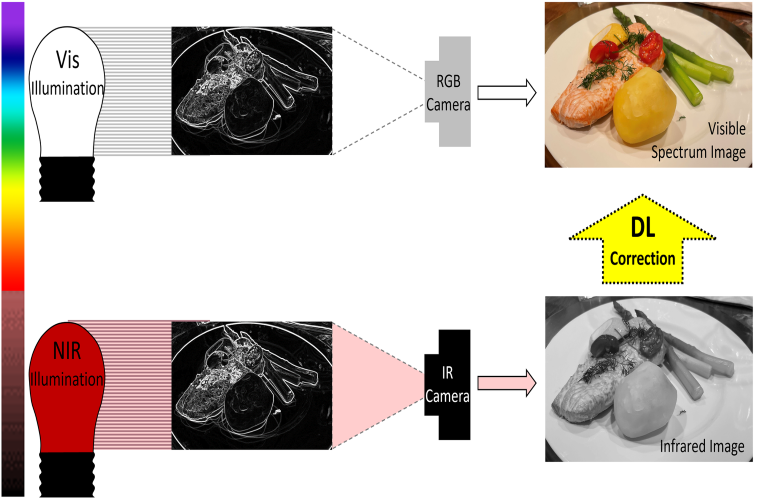

This way of presenting night vision data discards most of the scene's information, above all its color. Therefore, the researchers behind the study “sought to develop an imaging algorithm powered by optimized deep learning architectures whereby infrared spectral illumination of a scene could be used to predict a visible spectrum rendering of the scene as if it were perceived by a human with visible spectrum light.”

In short, they wanted to see if they could gather enough data from an infrared image to “teach” a smart computer how to turn that image back into full color. “This would make it possible to digitally render a visible spectrum scene to humans when they are otherwise in complete ‘darkness’ and only illuminated with infrared light,” the study explains.

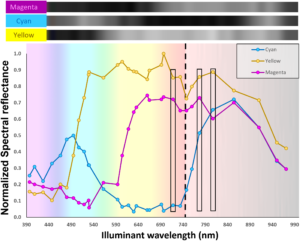

Images of each dye were captured by a monochromatic camera and grayscale reflectance intensity sequentially arrayed in the top inset.

To do this, the researchers used a monochromatic camera sensitive to both visible and near-infrared light. The camera photographed printed images of human faces under red, green, and blue illumination as well as under multiple infrared wavelengths.

“We acquired photographs of each image under different wavelengths of illumination to then be used to train machine learning models to predict RGB color images from individual or combinations of single wavelength illuminated images,” the research paper explains. “We then optimized a convolutional neural network (CNN) with a U-Net-like architecture to predict visible spectrum images from only near-infrared images.”

According to the study, the system worked well, recreating the source images in full color using only the monochromatic infrared images.

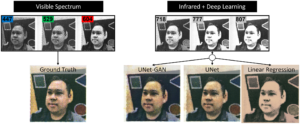

(left) Visible spectrum ground truth image composed of red, green and blue input images. (right) Predicted reconstructions for UNet-GAN, UNet and linear regression using 3 infrared input images.
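
The simplest of the three approaches compared in the figure, linear regression, can be illustrated with a short sketch. The Python below is not the study's code: the function names `fit_linear_baseline` and `predict_rgb`, the per-pixel formulation, and the synthetic data are all assumptions made for illustration. It fits one linear map from a stack of infrared-illuminated grayscale images to the red, green, and blue values of each pixel, which is roughly the kind of baseline a U-Net would be expected to improve on.

```python
import numpy as np


def _design_matrix(ir_stack):
    """Flatten an (H, W, C) stack to (H*W, C+1) rows with a bias column."""
    c = ir_stack.shape[2]
    pixels = ir_stack.reshape(-1, c)
    return np.hstack([pixels, np.ones((pixels.shape[0], 1))])


def fit_linear_baseline(ir_stack, rgb):
    """Least-squares fit from C infrared channels to RGB, pixel by pixel.

    ir_stack: (H, W, C) grayscale images, one per infrared wavelength.
    rgb:      (H, W, 3) visible-spectrum ground truth.
    Returns a (C+1, 3) weight matrix (last row is the bias).
    """
    X = _design_matrix(ir_stack)
    Y = rgb.reshape(-1, 3)
    weights, *_ = np.linalg.lstsq(X, Y, rcond=None)
    return weights


def predict_rgb(ir_stack, weights):
    """Apply the fitted weights and clip to the valid [0, 1] range."""
    h, w, _ = ir_stack.shape
    pred = _design_matrix(ir_stack) @ weights
    return np.clip(pred, 0.0, 1.0).reshape(h, w, 3)


if __name__ == "__main__":
    # Synthetic stand-in data (hypothetical, not from the study):
    # three infrared channels and an RGB target for a 16x16 image.
    rng = np.random.default_rng(0)
    ir = rng.random((16, 16, 3))
    rgb_truth = rng.random((16, 16, 3))
    w = fit_linear_baseline(ir, rgb_truth)
    rgb_pred = predict_rgb(ir, w)
    print("mean squared error:", np.mean((rgb_pred - rgb_truth) ** 2))
```

A per-pixel linear map like this ignores everything around each pixel, so it can only use the spectral signature at that point. A convolutional network also sees the surrounding image context.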

“In short, this study suggests that CNNs are capable of producing color reconstructions starting from infrared-illuminated images, taken at different infrared wavelengths invisible to humans,” the study concludes. “Thus, it supports the impetus to develop infrared visualization systems to aid in a variety of applications where visible light is absent or not suitable.”

COLOR NIGHT VISION HAS MANY POSSIBLE APPLICATIONS

The researchers behind the study note that while their process was a success, interpreting the spectral signature of each ink was only part of the equation. The context the machine learning model absorbed during training mattered at least as much, with the researchers writing that “predicting high-resolution images is more dependent upon training context than on spectroscopic signatures for each ink.”

Still, the researchers feel their work is a critical first step, showing that this type of conversion of infrared images into color is feasible.

“This proof-of-principle study using printed images with a limited optical pigment context supports the notion that the deep learning approach could be refined and deployed in more practical scenarios,” the study concludes. “These scenarios include vision applications where little visible light is present either by necessity or by goal.”

Follow and connect with author Christopher Plain on Twitter: @plain_fiction